Openstack setup

Configuration

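The paragraph below refers to sourcing openrc files for credentials. Sourcing one only exports a few OS_* environment variables into the shell. This is a minimal Python sketch of the same effect. It assumes the file contains nothing but comments and `export NAME=value` lines, as in the Juno guide's admin-openrc.sh. The sample values (ADMIN_PASS, controller) are that guide's placeholders, not our real settings.

```python
import os
import shlex


def parse_openrc(text):
    """Return the variables set by `export NAME=value` lines in an openrc file.

    Assumes a simple openrc: only comments, blank lines and export statements.
    Any other shell logic is ignored.
    """
    env = {}
    for line in text.splitlines():
        # shlex applies shell-style quoting rules and drops # comments
        tokens = shlex.split(line, comments=True)
        if len(tokens) == 2 and tokens[0] == "export" and "=" in tokens[1]:
            name, _, value = tokens[1].partition("=")
            env[name] = value
    return env


# admin-openrc.sh as shown in the Juno install guide (placeholder values)
SAMPLE = """\
export OS_TENANT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD=ADMIN_PASS
export OS_AUTH_URL=http://controller:35357/v2.0
"""

if __name__ == "__main__":
    creds = parse_openrc(SAMPLE)
    os.environ.update(creds)  # like `source`, but only for this Python process
    for name in sorted(creds):
        print(name)
```

Shell logic in a real openrc file (conditionals, password prompts) is not handled. For interactive work, `source` remains the simple option.
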
Followed the howto here: http://docs.openstack.org/juno/install-guide/install/yum/content/ch_keystone.html

Basic configuration is done with cfengine policy (bundle openstack). Adding new databases, users, and service endpoints was done manually. Configs for the various openstack services are handled by cfengine according to the type of node a machine is classified as. For the time being, vctl01 fills all of the "control" node roles. virt01 and virt02 are hypervisor nodes and network nodes (but not network controllers; that role is on vctl01). Created admin and aglt2 tenants. Under the aglt2 tenant, created a 'demo' user. Source /root/tools/admin-openrc.sh for admin credentials. Source /root/tools/aglt2-demo-openrc.sh for aglt2 demo user credentials.

Interfacing to ceph
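
The directions referenced below create one Ceph user per openstack service, with capabilities limited to the pools that service needs. This Python sketch prints those `ceph auth get-or-create` commands with our pool names substituted for the guide's generic ones (vms, volumes, images, backups). The capability strings follow the pattern in the Ceph rbd-openstack guide. They are an assumption about how our users were created; 'ceph auth get client.cinder' shows what is actually in place. The script only prints commands and does not run them.

```python
import shlex

# Our pool names, keyed by the generic names used in the Ceph rbd-openstack guide.
POOLS = {
    "vms": "osvms",
    "volumes": "osvolumes",
    "images": "osimages",
    "backups": "osbackup",
}

# Per-client OSD grants following the guide's pattern (assumption: our users match).
OSD_CAPS = {
    "client.glance": [("rwx", "images")],
    "client.cinder": [("rwx", "volumes"), ("rwx", "vms"), ("rx", "images")],
    "client.cinder-backup": [("rwx", "backups")],
}


def auth_command(client):
    """Build the `ceph auth get-or-create` argv for one openstack client."""
    grants = ["allow class-read object_prefix rbd_children"]
    grants += [f"allow {perm} pool={POOLS[pool]}" for perm, pool in OSD_CAPS[client]]
    return ["ceph", "auth", "get-or-create", client,
            "mon", "allow r",
            "osd", ", ".join(grants)]


def keyring_path(client):
    """Where cfengine should place the keyring for `client` on an openstack node."""
    return f"/etc/ceph/ceph.{client}.keyring"


if __name__ == "__main__":
    for client in OSD_CAPS:
        print(shlex.join(auth_command(client)))
        print("  keyring ->", keyring_path(client))
```
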

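On the libvirt side, what the policy does can be sketched in Python:

1. Read the key out of the cinder keyring file.
2. Build the secret XML around the "ceph_secret_uuid" value.
3. Hand both to virsh.

The XML layout and the two virsh subcommands follow the Ceph rbd-openstack guide. The sample keyring text and the generated uuid are hypothetical, and `uuid.uuid4()` stands in for `uuidgen`. The commands are printed rather than executed. In the Ceph guide, this same uuid is what cinder.conf and nova.conf reference as rbd_secret_uuid.

```python
import uuid
import xml.etree.ElementTree as ET


def read_key(keyring_text, client="client.cinder"):
    """Return the base64 key for `client` from the text of a Ceph keyring file."""
    section = None
    for raw in keyring_text.splitlines():
        line = raw.strip()
        if line.startswith("[") and line.endswith("]"):
            section = line[1:-1]  # e.g. "client.cinder"
        elif section == client and "=" in line:
            name, _, value = line.partition("=")  # base64 "==" padding stays in value
            if name.strip() == "key":
                return value.strip()
    raise KeyError(f"no key for {client} in keyring")


def secret_xml(secret_uuid, client="client.cinder"):
    """Build the libvirt secret definition in the layout the Ceph guide uses."""
    secret = ET.Element("secret", ephemeral="no", private="no")
    ET.SubElement(secret, "uuid").text = secret_uuid
    usage = ET.SubElement(secret, "usage", type="ceph")
    ET.SubElement(usage, "name").text = f"{client} secret"
    return ET.tostring(secret, encoding="unicode")


def virsh_commands(secret_uuid, key, xml_file="secret.xml"):
    """argv lists for defining the secret and setting its value (not executed here)."""
    return [
        ["virsh", "secret-define", "--file", xml_file],
        ["virsh", "secret-set-value", "--secret", secret_uuid, "--base64", key],
    ]


if __name__ == "__main__":
    # Hypothetical keyring text; the real one is /etc/ceph/ceph.client.cinder.keyring
    sample = "[client.cinder]\n\tkey = AQBEXAMPLEKEYONLY==\n"
    ceph_secret_uuid = str(uuid.uuid4())  # stands in for `uuidgen`
    print(secret_xml(ceph_secret_uuid))
    for cmd in virsh_commands(ceph_secret_uuid, read_key(sample)):
        print(" ".join(cmd))
```

Changing the uuid produces a different XML file, which is the change that triggers the re-import described in the paragraph below.
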
Followed directions here: http://ceph.com/docs/master/rbd/rbd-openstack/

Created pools osvms, osvolumes, osimages, and osbackup. After creating users, copied the keyring info into the corresponding files in the cfengine ceph bundle, to be distributed to the openstack nodes that need them. Keyring info can always be retrieved with a command like 'ceph auth get client.cinder'. That info then needs to be in the file /etc/ceph/ceph.client.cinder.keyring on the cinder openstack node. Cfengine takes care of putting the keyring files where they need to go by copying them from the ceph bundle stash.

For hypervisor clients running libvirt (class OPENSTACK_LIBVIRT in the policy), the cinder secret has to be imported into libvirt. Cfengine takes care of this by getting the key out of the keyring file installed by the ceph bundle. The uuid defined in the openstack policy variable "ceph_secret_uuid" is used to create an XML file that cfengine imports into libvirt. The same uuid is referenced elsewhere to tell openstack/libvirt which secret to use. The ceph instructions document the details of the commands the policy runs on libvirt machines. If you change the secret, change the "ceph_secret_uuid" variable, and import of the new secret/uuid combination will be triggered. Any uuid generated by "uuidgen" can be used. Because the two bundles are tied together, the ceph bundle references some classes defined in the openstack bundle to trigger the actions noted here.

Network config

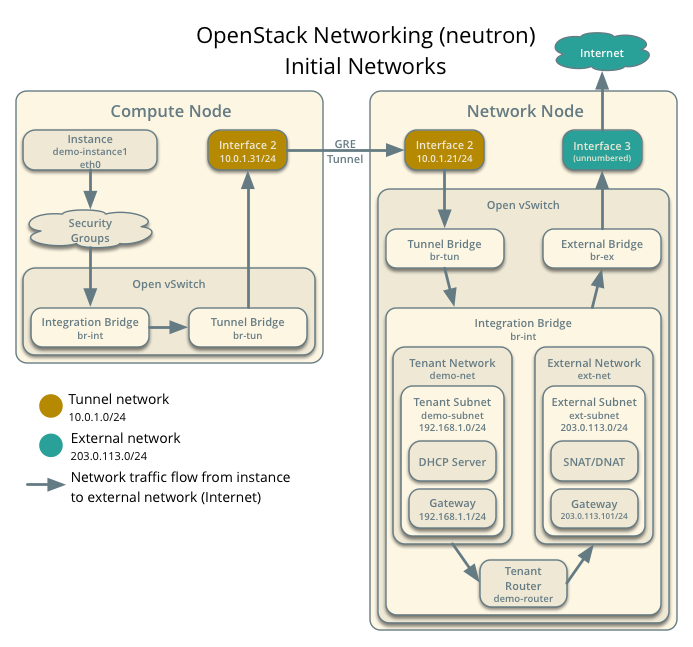

http://docs.openstack.org/juno/install-guide/install/yum/content/neutron-network-node.html

This is a complex topic. Our initial network is fairly simple and uses vctl01 as both "network controller" (cfengine: OPENSTACK_NETWORK) and "network node" (OPENSTACK_NETWORK_NODE). The "node" does the actual network routing; it is essentially a switch running openvswitch to create flows. Ultimately the goal is to have all hypervisor nodes also function as redundant network nodes, rather than having separate redundant boxes act as network nodes (I think that is the goal, anyway). It might also make sense to have a cluster of nodes dedicated to being virtual software-controlled switches, in much the same way that we already have dedicated hardware switches. Openvswitch also has agents that can control dedicated hardware switches. There are a lot of ways to fit these pieces together.

INSTANCE_TUNNELS_INTERFACE_IP_ADDRESS in the docs is the system's private IP. It could be any IP, as long as it is consistently on the same network on all hypervisor and network nodes where it is defined in the ml2_conf.ini file. VM instances use it to tunnel from the compute node to the openvswitch instance on the network node. The openvswitch instance handles internal networking, DHCP, routing to external floating IPs, etc. Our tunnel network is the private net.

The floating IP range, referenced as "ext-subnet" in the howto docs, is as documented in our list of IPs. For our initial testing, the "br-ex" interface is eno2 on vctl01. This must be a dedicated interface because it is taken over by the vswitch. I imagine one could have a host interface on the same vswitch so that it also serves as the system interface, but we're not there yet. The bridge is created manually, following the network node setup in the howto docs at the link above.
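
The tunnel address only has to sit on the same network on every hypervisor and network node. That constraint is easy to check before it goes into ml2_conf.ini. This is a minimal Python sketch, assuming hypothetical 10.10.1.0/24 addresses in place of our real private-net IPs. It does two things:

- It flags any host whose tunnel IP falls outside the tunnel network.
- It renders two [ovs] keys from the Juno network-node guide: local_ip on every node, and bridge_mappings only on the node that owns br-ex.

All other ml2_conf.ini sections and keys are omitted.

```python
import configparser
import io
import ipaddress

# Hypothetical private-net values; substitute the real ones from our IP list.
TUNNEL_NET = ipaddress.ip_network("10.10.1.0/24")
TUNNEL_IPS = {
    "vctl01": "10.10.1.10",  # network node (OPENSTACK_NETWORK_NODE)
    "virt01": "10.10.1.11",  # hypervisor
    "virt02": "10.10.1.12",  # hypervisor
}


def hosts_off_network(ips, net):
    """Return hosts whose tunnel IP is not inside `net`; empty means consistent."""
    return sorted(h for h, ip in ips.items() if ipaddress.ip_address(ip) not in net)


def ovs_section(local_ip, network_node=False):
    """Render the [ovs] keys for one node's ml2_conf.ini (other keys omitted)."""
    cfg = configparser.ConfigParser()
    cfg["ovs"] = {"local_ip": local_ip}
    if network_node:
        # Only the node that owns br-ex maps the external network to it
        cfg["ovs"]["bridge_mappings"] = "external:br-ex"
    buf = io.StringIO()
    cfg.write(buf)
    return buf.getvalue()


if __name__ == "__main__":
    bad = hosts_off_network(TUNNEL_IPS, TUNNEL_NET)
    if bad:
        raise SystemExit(f"tunnel IPs outside {TUNNEL_NET}: {bad}")
    for host, ip in TUNNEL_IPS.items():
        print(f"# {host}")
        print(ovs_section(ip, network_node=(host == "vctl01")))
```
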

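The manual bridge setup from the network-node guide amounts to two ovs-vsctl calls. This Python sketch prints them for our case (br-ex on eno2 on vctl01) and only executes them when dry_run is False. Running them for real needs root and a running openvswitch service. Adding eno2 to the bridge takes the interface over, so it should not be done over a session that depends on eno2.

```python
import shlex
import subprocess


def bridge_commands(bridge="br-ex", interface="eno2"):
    """Create the external bridge and attach the dedicated interface to it."""
    return [
        ["ovs-vsctl", "add-br", bridge],
        ["ovs-vsctl", "add-port", bridge, interface],
    ]


def run(commands, dry_run=True):
    """Print each command; execute it only when dry_run is False (needs root)."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    run(bridge_commands())  # dry run: prints the two commands for vctl01
```

The guide's commands fail if the bridge or port already exists. ovs-vsctl's `--may-exist` option (as in `ovs-vsctl --may-exist add-br br-ex`) would make re-running them harmless.
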
Launching Instances

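The paragraph below points to the Juno launch-instance guide. The client calls that guide walks through can be sketched, in order, as Python argv lists. The following values are the guide's examples and are assumptions about our setup:

- image name: cirros-0.3.3-x86_64
- flavor: m1.tiny
- key pair: demo-key, assumed to already exist
- external network: ext-net
- instance name: demo-instance1

The network id and floating IP are placeholders. Fill them in from `neutron net-list` and from the output of `neutron floatingip-create`. The commands are printed, not run. They are meant to be run as the demo user after sourcing /root/tools/aglt2-demo-openrc.sh.

```python
import shlex


def launch_commands(net_id, image="cirros-0.3.3-x86_64", flavor="m1.tiny",
                    key_name="demo-key", name="demo-instance1"):
    """Boot, console and floating-IP allocation, as in the Juno launch guide."""
    return [
        ["nova", "boot", "--flavor", flavor, "--image", image,
         "--nic", f"net-id={net_id}", "--security-group", "default",
         "--key-name", key_name, name],
        ["nova", "get-vnc-console", name, "novnc"],
        ["neutron", "floatingip-create", "ext-net"],
    ]


def associate_command(floating_ip, name="demo-instance1"):
    """Attach the address returned by floatingip-create to the instance."""
    return ["nova", "floating-ip-associate", name, floating_ip]


if __name__ == "__main__":
    # DEMO_NET_ID and FLOATING_IP are placeholders, not real values
    for cmd in launch_commands("DEMO_NET_ID"):
        print(shlex.join(cmd))
    print(shlex.join(associate_command("FLOATING_IP")))
```

The association is a separate function because the floating IP is only known after `floatingip-create` returns.
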
A sample instance launch is documented here: http://docs.openstack.org/juno/install-guide/install/yum/content/launch-instance-neutron.html

The guide covers creating the instance, opening its console, and assigning a floating (external) IP. Do all of these operations from the vctl01 node as the "demo" user by sourcing /root/tools/aglt2-demo-openrc.sh. The cirros image is already uploaded to our image service. I've tested that all of this works, though next I would like to make the network node configuration more robust.

Dashboard

Yes, that will be nice. I look forward to installing that feature.

Upgrading

After setting up Juno, the Kilo release came out: https://wiki.openstack.org/wiki/ReleaseNotes/Kilo

Followed the sequence of steps here: http://docs.openstack.org/openstack-ops/content/ops_upgrades-general-steps.html

- Updated the "rdo-release" rpm on vctl01 to the -kilo version (new rpm in our repository).

- Stopped all openstack and cfengine services, and ran "yum update" to update components.

- Went through the installation guide starting with the identity service and modified cfengine policy where necessary. For example, the keystone service changed to an Apache-based WSGI service. Different packages were required, and some additional config for apache was needed. Memcached was added, and a new config section for it was added to keystone.conf.

- Components were updated and tested one at a time. Commented out the "services:" section of the cfengine policy so I could update the policy for one piece, apply it, start the service manually, and verify it was working before moving on to the next piece.

- Stopped services, made sure they wouldn't start again (disabled, temporarily changed service policy in cfengine).

- Applied config updates based on install guide (via cfengine policy).

- Installed new configs for the apache-based service.

- Ran 'su -s /bin/sh -c "keystone-manage db_sync" keystone' to update the database.

- Started keystone service, ran some of the verification steps in the guide. Seems good.
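
The keystone part of the upgrade, in the order listed above, can be sketched as a small Python runner. A few assumptions apply:

- The systemd unit names (openstack-keystone, memcached, httpd) are based on RDO packaging.
- The cfengine policy run is only a manual placeholder; with dry_run False the script pauses there.
- Commands are printed unless dry_run is False.

`subprocess.run(..., check=True)` stops at the first failing command, which mirrors the "verify before moving on" approach.

```python
import shlex
import subprocess

# Keystone steps from the list above, in order. Unit names are assumptions
# based on RDO packaging; None marks a step done through cfengine, not here.
KEYSTONE_STEPS = [
    ("stop old keystone service", ["systemctl", "stop", "openstack-keystone.service"]),
    ("keep it from starting again", ["systemctl", "disable", "openstack-keystone.service"]),
    ("apply cfengine policy: keystone.conf, memcache section, apache wsgi config", None),
    ("update database", ["su", "-s", "/bin/sh", "-c", "keystone-manage db_sync", "keystone"]),
    ("start memcached", ["systemctl", "start", "memcached.service"]),
    ("start apache-hosted keystone", ["systemctl", "start", "httpd.service"]),
]


def run_steps(steps, dry_run=True):
    """Print each step; execute commands only when dry_run is False.

    check=True raises CalledProcessError on the first failure, so later
    steps never run against a half-upgraded service.
    """
    completed = []
    for label, cmd in steps:
        if cmd is None:
            print(f"[manual] {label}")
            if not dry_run:
                input("Press Enter once this step is done: ")
        else:
            print(f"[{label}] {shlex.join(cmd)}")
            if not dry_run:
                subprocess.run(cmd, check=True)
        completed.append(label)
    return completed


if __name__ == "__main__":
    run_steps(KEYSTONE_STEPS)
```
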

| Attachment | Size | Date | Who | Comment |
|---|---|---|---|---|
| installguide-neutron-initialnetworks.png | 122 K | 20 May 2015 - 19:33 | BenMeekhof | |


Topic revision: r7 - 22 Jul 2015, BenMeekhof

Copyright © by the contributing authors. All material on this collaboration platform is the property of the contributing authors.
